
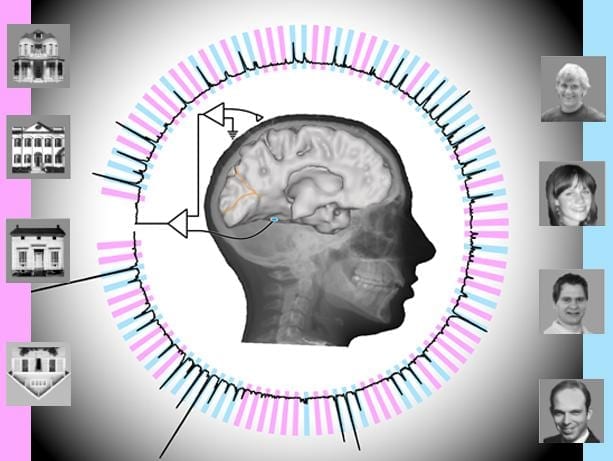

Illustration by Kai Miller and Brian Donohue

Electrodes in patients’ temporal lobes carry information that, when analyzed, enables scientists to predict what object patients are seeing

Using electrodes implanted in the temporal lobes of awake patients, scientists have decoded brain signals at nearly the speed of perception. Further, analysis of patients’ neural responses to two categories of visual stimuli – images of faces and houses – enabled the scientists to subsequently predict which images the patients were viewing, and when, with better than 95 percent accuracy.

The research is published today in PLOS Computational Biology.

University of Washington computational neuroscientist Rajesh Rao and UW Medicine neurosurgeon Jeff Ojemann, working with their student Kai Miller and with colleagues in Southern California and New York, conducted the study.

“We were trying to understand, first, how the human brain perceives objects in the temporal lobe, and second, how one could use a computer to extract and predict what someone is seeing in real time,” explained Rao. He is a UW professor of computer science and engineering, and he directs the National Science Foundation’s Center for Sensorimotor Neural Engineering, headquartered at UW.

“Clinically, you could think of our result as a proof of concept toward building a communication mechanism for patients who are paralyzed or have had a stroke and are completely locked-in,” he said.

The study involved seven epilepsy patients receiving care at Harborview Medical Center in Seattle. Each was experiencing epileptic seizures not relieved by medication, Ojemann said, so each had undergone surgery in which their brains’ temporal lobes were implanted – temporarily, for about a week – with electrodes to try to locate the seizures’ focal points.

“They were going to get the electrodes no matter what; we were just giving them additional tasks to do during their hospital stay while they are otherwise just waiting around,” Ojemann said.

Temporal lobes process sensory input and are a common site of epileptic seizures. Situated behind mammals’ eyes and ears, the lobes are also involved in Alzheimer’s and dementias and appear somewhat more vulnerable than other brain structures to head traumas, he said.

In the experiment, the electrodes from multiple temporal-lobe locations were connected to powerful computational software that extracted two characteristic properties of the brain signal: “event-related potentials” and “broadband spectral changes.”

Rao characterized the former as likely arising from “hundreds of thousands of neurons being co-activated when an image is first presented,” and the latter as “continued processing after the initial wave of information.”

The subjects, watching a computer monitor, were shown a random sequence of pictures – brief (400-millisecond) flashes of images of human faces and houses, interspersed with blank gray screens. Their task was to watch for an image of an upside-down house.

“We got different responses from different (electrode) locations; some were sensitive to faces and some were sensitive to houses,” Rao said.

The computational software sampled and digitized the brain signals 1,000 times per second to extract their characteristics. The software also analyzed the data to determine which combination of electrode locations and signal types correlated best with what each subject actually saw.

In that way it yielded highly predictive information.
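To make the sampling step concrete, here is a minimal Python sketch, not the researchers' software. At 1,000 samples per second, one sample corresponds to one millisecond, so a 400-millisecond image flash spans 400 samples. Averaging the stretches of signal that follow each image onset is one common way to estimate an event-related potential. The function names and the tiny signal below are hypothetical and exist only for illustration.

```python
SAMPLE_RATE_HZ = 1000  # from the article: 1,000 samples per second


def ms_to_samples(duration_ms, sample_rate_hz=SAMPLE_RATE_HZ):
    """Convert a duration in milliseconds to a whole number of samples."""
    return int(round(duration_ms * sample_rate_hz / 1000))


def cut_epochs(signal, onsets, length):
    """Slice fixed-length windows that start at each stimulus onset index."""
    return [signal[s:s + length] for s in onsets if s + length <= len(signal)]


def average_epochs(epochs):
    """Average the windows sample by sample (a simple ERP estimate)."""
    count = len(epochs)
    return [sum(column) / count for column in zip(*epochs)]


# Toy values only: a made-up 10-sample signal with two "image onsets".
toy_signal = [0, 0, 1, 2, 3, 0, 3, 2, 1, 0]
toy_onsets = [2, 6]
toy_epochs = cut_epochs(toy_signal, toy_onsets, length=3)
toy_erp = average_epochs(toy_epochs)

flash_samples = ms_to_samples(400)  # the 400 ms image flash
print(flash_samples, toy_erp)       # 400 [2.0, 2.0, 2.0]
```

A real analysis would work on recorded voltages from each electrode and would also estimate broadband spectral power, which this sketch leaves out.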

By training an algorithm on the subjects’ responses to the (known) first two-thirds of the images, the researchers could examine the brain signals representing the final third of the images, whose labels were unknown to them, and predict with 96 percent accuracy whether and when (within 20 milliseconds) the subjects were seeing a house, a face or a gray screen.
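The train-then-predict procedure can be illustrated with a short Python sketch. It assumes a nearest-centroid classifier, which is a stand-in chosen for brevity; the article does not say which algorithm the researchers used. Each trial is reduced to two hypothetical numbers, one summarizing the event-related potential and one summarizing the broadband change, and all values below are invented toy data.

```python
def chronological_split(items):
    """Return the first two-thirds for training and the final third for testing."""
    cut = (len(items) * 2) // 3
    return items[:cut], items[cut:]


def fit_centroids(features, labels):
    """Compute the mean feature vector for each label."""
    sums, counts = {}, {}
    for vector, label in zip(features, labels):
        total = sums.setdefault(label, [0.0] * len(vector))
        for i, value in enumerate(vector):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in total]
            for label, total in sums.items()}


def predict(centroids, vector):
    """Pick the label whose centroid is closest in squared distance."""
    def squared_distance(label):
        return sum((a - b) ** 2 for a, b in zip(centroids[label], vector))
    return min(centroids, key=squared_distance)


# Toy trials (made up): [ERP feature, broadband feature] per image shown.
features = [[1.0, 0.2], [0.1, 1.1], [0.0, 0.0],
            [0.9, 0.3], [0.2, 0.9], [0.1, 0.1],
            [1.1, 0.1], [0.0, 1.0], [0.05, 0.05]]
labels = ["face", "house", "gray", "face", "house", "gray",
          "face", "house", "gray"]

train_x, test_x = chronological_split(features)
train_y, test_y = chronological_split(labels)

centroids = fit_centroids(train_x, train_y)
predictions = [predict(centroids, v) for v in test_x]
accuracy = sum(p == t for p, t in zip(predictions, test_y)) / len(test_y)
print(predictions, accuracy)  # ['face', 'house', 'gray'] 1.0
```

Splitting by order rather than at random mirrors the article's description: the classifier sees only the earlier trials and is scored on later ones it has never encountered. The perfect score here reflects the cleanly separated toy data, not the study's result.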

This accuracy was attained only when event-related potentials and broadband changes were combined for prediction, which suggests they carry complementary information.

“Traditionally scientists have looked at single neurons,” Rao said. “Our study gives a more global picture, at the level of very large networks of neurons, of how a person who is awake and paying attention perceives a complex visual object.”

The scientists’ technique, he said, is a stepping stone for brain mapping, in that it could be used to identify in real time which locations of the brain are sensitive to particular types of information.

Read more: Scientists decode brain signals nearly at speed of perception
