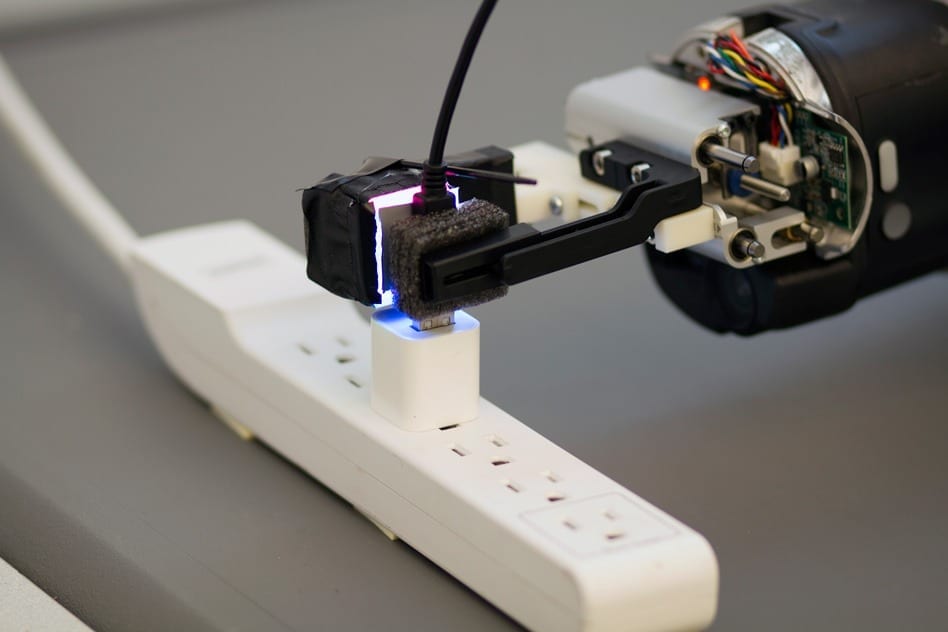

Equipped with a novel optical sensor, a robot grasps a USB plug and inserts it into a USB port.

Researchers at MIT and Northeastern University have equipped a robot with a novel tactile sensor that lets it grasp a USB cable draped freely over a hook and insert it into a USB port.

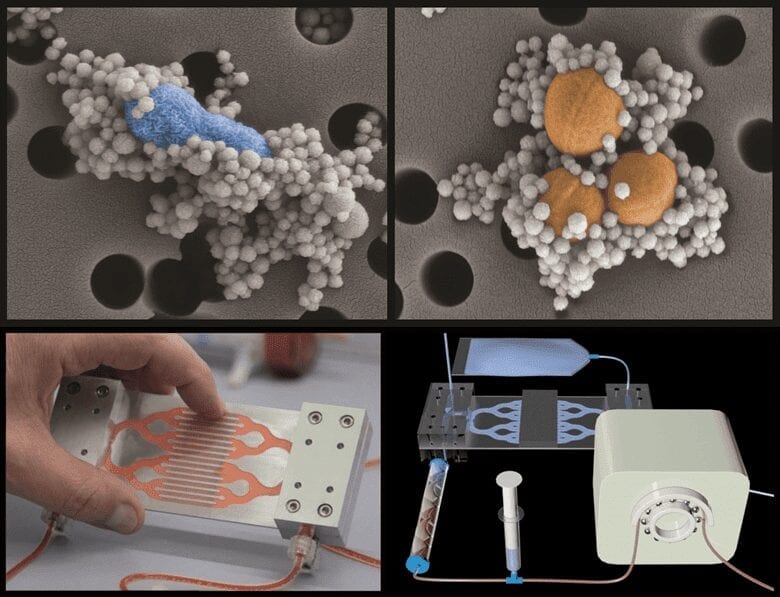

The sensor is an adaptation of a technology called GelSight, which was developed by the lab of Edward Adelson, the John and Dorothy Wilson Professor of Vision Science at MIT, and first described in 2009. The new sensor isn’t as sensitive as the original GelSight sensor, which could resolve details on the micrometer scale. But it’s smaller — small enough to fit on a robot’s gripper — and its processing algorithm is faster, so it can give the robot feedback in real time.

Industrial robots are capable of remarkable precision when the objects they’re manipulating are perfectly positioned in advance. But according to Robert Platt, an assistant professor of computer science at Northeastern and the research team’s robotics expert, for a robot taking its bearings as it goes, this type of fine-grained manipulation is unprecedented.

“People have been trying to do this for a long time,” Platt says, “and they haven’t succeeded because the sensors they’re using aren’t accurate enough and don’t have enough information to localize the pose of the object that they’re holding.”

The researchers presented their results at the International Conference on Intelligent Robots and Systems this week. The MIT team (Adelson; first author Rui Li, a PhD student; Wenzhen Yuan, a master’s student; and Mandayam Srinivasan, a senior research scientist in the Department of Mechanical Engineering) designed and built the sensor. Platt’s team at Northeastern, which included Andreas ten Pas and Nathan Roscup, developed the robotic controller and conducted the experiments.

Synesthesia

Whereas most tactile sensors use mechanical measurements to gauge mechanical forces, GelSight uses optics and computer-vision algorithms.
